
Jazzy Beach Critters is a proof-of-concept demonstration of applying functional models for real-time music generation to a game scene. It is described in a short paper originally written for the AISB 2020 Symposium on Computational Creativity and presented at the rescheduled AISB event in 2021.

AISB CC materials for Jazzy Beach Critters: paper | slides

Interactive Web Demo

URL: http://www.musicaresearch.org/MusicalCritters/index.html

The online demo works best in the new Microsoft Edge (the Chromium-based version) on Windows 10. Chrome on Mac and Windows also works well, but the audio may not load immediately: you may initially hear a pause followed by a pile of notes that sorts itself out after a while (just be patient for a few seconds if this happens). Older versions of Microsoft Edge and Android/iOS devices have known compatibility issues. Please be aware that the web demo now shares part of its generative music back end with another ongoing research project (MUSICA). Because of this, functionality may evolve over time and bugs may arise as things change.

Things you can do in the online interactive demo:

- Feed, pet, or poke the critters (the first three buttons, from left to right) to move them around and change their moods.

- Click the beach ball to change the overall style.

- Trade solos with the crab (rightmost button). A keyboard will rise out of the sand in the right corner. If the crab is over there, you might want to lure him out of the way with some food first so you have room to play.

- The spacebar also does something (press it a few times and see).

Video Demos

Video demo of the original version from 2020:

Demonstration of a newer solo-trading feature that is now also in the online demo:

About Jazzy Beach Critters

Jazzy Beach Critters was an exploration of how soundtracks might be generated at the score level in games, rather than by simply cross-fading between pre-rendered audio clips. There are two kinds of sound in this demo: sound effects triggered by critter and user actions, and generated music. Each critter represents a musical part (solo, harmony, or bass) and emits notes corresponding to the notes its part plays. The music produced by the group changes when the critters’ moods change, but it does so while preserving harmonic and metrical coherence. The user can interact by petting a critter (which makes it happier), poking a critter (which makes it angrier), dropping food for the critters to eat, and clicking the beach ball to change the overall style. Happy critters play in key with each other, while angry critters become chromatic/atonal.
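As a rough illustration of how a critter's mood could constrain its note choices, here is a minimal Haskell sketch. It assumes a major-key context, a two-valued `Mood` type, and a toy linear congruential generator so that it needs nothing beyond `base`. Every name in it (`Mood`, `allowedPitchClasses`, `melody`, and so on) is hypothetical; this is not the actual Jazzy Beach Critters code, which uses richer generative models.

```haskell
-- Hypothetical sketch: mood-dependent pitch choice for one critter's part.
-- Names and details are illustrative, not taken from the real code base.

data Mood = Happy | Angry deriving (Show, Eq)

-- Pitch classes (0 = C) of the major scale on a given root.
majorScale :: Int -> [Int]
majorScale root = [ (root + i) `mod` 12 | i <- [0, 2, 4, 5, 7, 9, 11] ]

-- Happy critters stay in key; angry critters draw from all twelve pitch classes.
allowedPitchClasses :: Mood -> Int -> [Int]
allowedPitchClasses Happy root = majorScale root
allowedPitchClasses Angry _    = [0 .. 11]

-- Toy linear congruential generator so the sketch depends only on base.
-- It is not a high-quality random source.
nextSeed :: Int -> Int
nextSeed s = (1103515245 * s + 12345) `mod` 2147483648

-- n stochastic MIDI pitches in the octave starting at `octaveBase`
-- (e.g. 60 for middle C), given a mood, a key root, and a seed.
melody :: Mood -> Int -> Int -> Int -> Int -> [Int]
melody mood root octaveBase seed n = take n (go (nextSeed seed))
  where
    pcs = allowedPitchClasses mood root
    go s = (octaveBase + pcs !! (s `mod` length pcs)) : go (nextSeed s)
```

Loaded in GHCi, `melody Happy 0 60 42 8` gives eight pitches drawn only from C major, while `melody Angry 0 60 42 8` may include any chromatic pitch. Keeping onsets on the beat grid (metrical coherence) is a separate concern that this sketch does not model.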

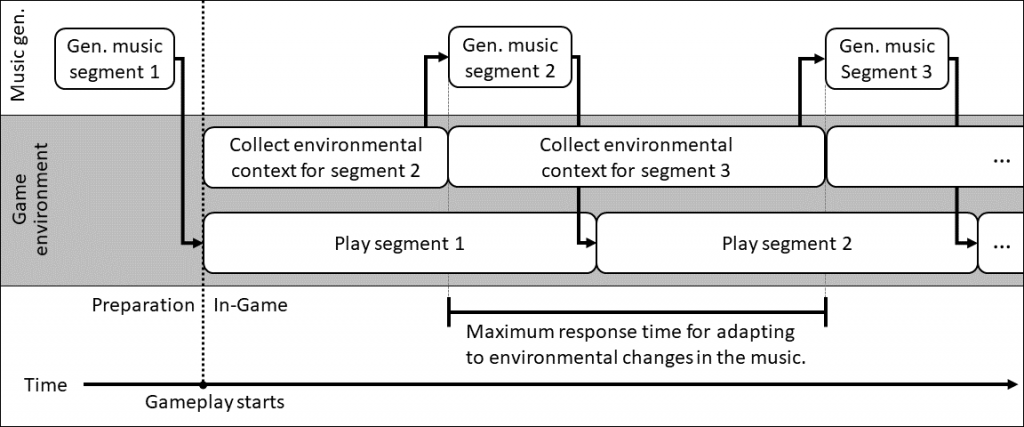

Jazzy Beach Critters was a collaboration with Christopher N. Burrows, who designed the game scene with Unity and C#. The generative algorithms are implemented in Haskell. Music is generated at the level of individual notes, each of which triggers a single-note sample within the Unity framework. All of the generated music is stochastic, and all sound synthesis takes place within the game in real time. Unlike a number of other procedural soundtrack implementations for games, this scene contains no pre-recorded or pre-composed musical passages. The communication between the game environment and the music generation algorithms is also bidirectional: game events influence the music, and the music in turn influences game events (as evidenced by the critters producing notes in sync with the music).
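The bidirectional link can be pictured as a pair of message types: game events flowing into the generator and note events flowing back out to the scene. The following Haskell sketch is hypothetical and is not the interface actually used between the Unity/C# scene and the Haskell back end; the message names, the assumed cycle of three styles, and the fixed placeholder pitches are all illustrative assumptions.

```haskell
-- Hypothetical two-way protocol between the game scene and the music
-- generator. All names are illustrative, not the project's real interface.

data Part = Solo | Harmony | Bass deriving (Show, Eq)
data Mood = Happy | Angry deriving (Show, Eq)

-- Game -> music: user actions that change the generator's parameters.
data GameEvent
  = SetMood Part Mood  -- a critter was petted, poked, or fed
  | NextStyle          -- the beach ball was clicked
  deriving (Show, Eq)

-- Music -> game: each note triggers a single-note sample and lets the
-- matching critter react in sync with the music.
data NoteEvent = NoteOn { part :: Part, pitch :: Int, beat :: Int }
  deriving (Show, Eq)

data MusicState = MusicState
  { moods :: [(Part, Mood)]
  , style :: Int
  } deriving (Show, Eq)

initialState :: MusicState
initialState = MusicState [(Solo, Happy), (Harmony, Happy), (Bass, Happy)] 0

-- Apply a game event to the generator's parameters.
handleGameEvent :: GameEvent -> MusicState -> MusicState
handleGameEvent (SetMood p m) st =
  st { moods = [ (q, if q == p then m else old) | (q, old) <- moods st ] }
handleGameEvent NextStyle st = st { style = (style st + 1) `mod` numStyles }
  where
    numStyles = 3  -- assumed number of styles

-- Emit one note per part for a beat. A real generator would choose pitches
-- stochastically from mood and style; fixed placeholders stand in here.
notesForBeat :: MusicState -> Int -> [NoteEvent]
notesForBeat st b = [ NoteOn p (register p + offset m) b | (p, m) <- moods st ]
  where
    register Solo    = 72
    register Harmony = 64
    register Bass    = 36
    offset Happy = 0  -- stay on the placeholder chord tone
    offset Angry = 1  -- step a semitone outside it
```

Keeping the state update pure and separate from note emission means the game can push mood or style changes at any moment while the generator keeps producing notes on the beat, which is one way to let each side influence the other without interrupting playback.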

Generative Models for Music

The models for generative music are derived from my existing work on generative jazz and other interactive music, which is also part of the MUSICA Project. The same models have been used to produce a number of stand-alone compositions as well as interactive systems for live performance. Here is a more recent example of solo trading, in which the computer analyzes and responds to the user’s solos in a more complex way:

Because the same models have been used for real-time interactive musical systems where the machine responds to a human musician’s melodies, it would be possible to extend something like Jazzy Beach Critters to allow the user to interact more deeply with the soundtrack. For more information on these generative models for music, see the following:

- My page on Generative & Interactive Jazz, which has additional videos and demos of musical systems using this approach.

- A Functional Model of Jazz Improvisation – a paper presented at the 2019 Workshop on Functional Art, Music, Modeling, and Design at ICFP. Slides are available as PowerPoint with audio (pptx) and as PDF.

- My SoundCloud playlist of algo-jazz, featuring pieces made partially or fully with the same models, using more complex algorithms for individual parts. The Cube Squared is an example of a fully computer-made performance of a lead sheet; it uses generative algorithms very similar to those in Jazzy Beach Critters, but with different instruments and more parts (such as hand drums).